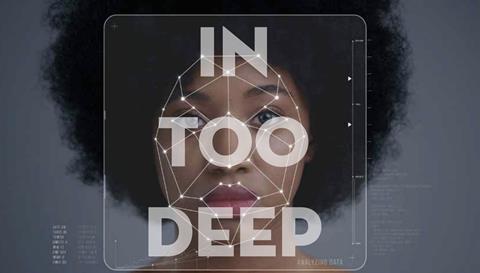

Megan Cornwell explores the implications associated with DeepFakes: the dangerous new technology making fake news even more convincing

During this pandemic there has been a huge appetite for accurate, timely and trusted information. Up until a few months ago, we knew next-to-nothing about the deadly virus sweeping the globe, and in order to keep ourselves and our loved ones safe, we’ve sought out reliable articles, in-depth interviews and opinion pieces by experts who might be able to tell us more. But in our thirst for something tangible and comforting to hold on to during a period of unpredictability, we may have also consumed – and even shared – fake news.

At the end of March when lockdown started, a couple of emails and WhatsApp messages were sent to me by well-meaning friends, colleagues and family members that included false claims about Covid-19. One was a message purporting to have come from the Royal Free Hospital containing spurious details about how to detect and avoid the virus. A voice recording containing similarly spurious advice was shared widely on WhatsApp. The latter is a good example of the increasingly sophisticated forms of fake news being disseminated in our post-truth era. Disinformation is no longer just text or photo-based; it now includes voice notes and video footage.

DEEPFAKES

Into this environment, a new technology has been born that has the potential to up the ante. ‘DeepFakes’ are what’s known as ‘synthetic media’, which harness the power of artificial intelligence (AI) to swap or digitally alter faces in existing photographs or videos. Footage doctored using so-called DeepFake software is so convincing that it can be almost impossible to correctly discern what you’re viewing. Ever wondered how Disney was able to bring back a young Carrie Fisher as Princess Leia at the end of Rogue One: A Star Wars Story? Or how the rapper Kanye West recently surprised his wife, Kim Kardashian-West, with a hologram and message from her deceased father? By using DeepFake technology you can train a machine to recognise and copy the speech patterns and mannerisms of a person (alive or dead) as long as there is existing video footage of them that can be fed into a computer.

Up until now, DeepFakes have mainly been used in entertainment and pornography: AI firm DeepTrace found that of the 15,000 manipulated videos circulating online last September, 96 per cent were pornographic; most mapped faces of famous actresses onto naked people in X-rated films. While this itself is deeply disturbing, there’s an equally nefarious way DeepFakes are being used in the political realm. In 2018 a video appeared on the internet of Donald Trump addressing the people of Belgium on the subject of climate change. “As you know, I had the balls to withdraw from the Paris Climate Agreement,” he said, “and so should you.”

The footage provoked hundreds of comments, many expressing outrage that an American president would have the gumption to involve himself in an issue of national policy concerning another country. The video’s creators – a Flemish political party who created the video to raise awareness of the problem of climate change (it acted as a signpost to a petition for investment in renewable energy) – later said they assumed the poor quality of the footage would alert viewers to its inauthenticity. “It is clear from the lip movement that this is not a genuine speech by Trump,” a spokesperson for the party told American media outlet Politico.

It opens the door to even more corrosive levels of disinformation and distortion

The problem was, it didn’t. The relatively amateur DeepFake convinced many that they were watching a real speech from the White House. Imagine, then, that video footage appeared online today showing, let’s say, Pope Francis encouraging Catholics to replace the crucifixes in their churches with statues of Mary. Or the Archbishop of Canterbury telling people the pandemic isn’t real and that they should stop wearing masks and refuse a vaccine. Or what if a famous evangelist announced their conversion to Islam? How would we respond? In outrage and incredulity would we rush to share the video with friends across our social media accounts?

Rev Peter Crumpler, former communications director at the Church of England and author of a recent book that looks at fake news from a Christian perspective (Responding to Post-truth), says: “The key issue is that up until now, in general, we have been able to trust videos of people speaking, expressing their views or taking specific actions. Now, thanks to new technology, increasing numbers of people can create a good-quality video that shows someone doing or saying something that they didn’t do or say. It opens the door to even more corrosive levels of disinformation and distortion than we’ve seen before. It affects everyone who has ever watched or shared a video online. It’s a potential game-changer in terms of credibility and trust.”

While much has been made of the responsibility of social media platforms and tech giants with the resources to tackle this growing area of corruption, as well as the need for a legislative response, the reality is that without ordinary people sharing this material with their networks, there would be no market for these fakes. Those behind the subterfuge rely on you and me to watch and pass content on. They know that we’re more likely to share something we agree with, or which confirms our political or faith perspective, so being vigilant about our biases is a good place to start.

THE CAMERA NEVER LIES

If you’ve ever watched Facebook’s Mark Zuckerberg brag about having “total control of billions of people’s stolen data”, or watched Game of Thrones’ Jon Snow apologise for the disappointing ending of the TV show, or Barack Obama call Donald Trump “a total and complete dip****”, then you’ve seen a DeepFake. Jeremy Kahn, previously a senior tech reporter at Bloomberg, has described the phenomenon as “fake news on steroids” because of its particular power to deceive. Unlike other kinds of media output, video content has a specific immediacy that engenders trust, so when seeing is no longer believing it can distort our perceptions of reality. “In a climate of disinformation, people become confused, then cynical, and ultimately, we find ourselves believing no one and giving up on the whole political process,” explains Rev Crumpler.

DeepFake technology, and fake news more generally, increase our distrust of information and provide fertile ground for conspiracies to thrive. They also embolden those in powerful positions to question the authenticity of real news that is unfavourable, or which may cost them votes or support. World leaders could be tempted to follow Donald Trump’s example of calling anything he doesn’t agree with “fake news”. A recent Guardian article on DeepFakes asked readers to consider the following three scenarios.

When a recording of Donald Trump boasting about grabbing women’s genitals was leaked to the media during the 2016 election campaign, he later suggested the tape had been doctored (despite originally apologising). Last year, figures close to Prince Andrew cast doubt on the authenticity of a photo taken with Virginia Giuffre – who claims the royal was among the powerful friends of Jeffrey Epstein who used her for sex (an allegation he denies). Cameroon’s minister of communication also dismissed as fake news a video that Amnesty International believes shows the country’s soldiers executing civilians.

THE IMPLICATIONS FOR DEMOCRACY

Elections can be a particularly febrile area for distortion and lies – as we’ve seen recently in the US – but the techniques and tactics being deployed are becoming more sophisticated and convincing, and we must be on our guard. Russian interference in the elections of western democracies has been well-documented in recent years, and in particular the fabricated articles and disinformation campaigns that have reached millions of Americans across social media.

Some commentators have suggested that a well-timed forgery could sway an election result or even incite war. Consider a DeepFake depicting Democratic vote counters destroying Republican ballots or North Korean leader Kim Jong-un ordering a nuclear weapon strike against the US. Among examples of media manipulation during the previous American election in 2016 was a video that supposedly showed Hillary Clinton having a seizure. The original footage, taken by reporter Lisa Lerer, who at the time worked for the Associated Press, was of the then-Democratic nominee shaking her head vigorously for a few seconds after being bombarded by questions from journalists in a café. Some of the videos that were later shared online showed Clinton jerking and appearing startled for much longer, looking at best confused and at worst deranged.

The footage wasn’t created using DeepFake technology – it was, in fact, an example of a ‘ShallowFake’, a video that has been presented out of context or doctored by humans with simple editing tools. What it demonstrated was the power of a strategic edit to retell a story and stoke division. Trump supporters liked and shared it as ‘evidence’ of Clinton’s ailing health and unsuitability for office.

A WELL-TIMED FORGERY COULD SWAY AN ELECTION RESULT OR EVEN INCITE WAR

Writing for the Chicago Tribune, Lerer said: “Two months later, that innocuous exchange has become the fodder for one of some Trump supporters’ most popular conspiracy theories: her failing health. Where I saw evasiveness, they see seizures.” In a similar story from last year, Donald Trump tweeted an edited version of a video of Democrat House Speaker Nancy Pelosi that made her look as if she were slurring her words. Trump shared the manipulated footage with his millions of followers on Twitter, saying: “PELOSI STAMMERS THROUGH NEWS CONFERENCE”.

His post garnered more than 96,000 likes and 31,000 retweets. I was shown the Clinton footage by a journalist colleague at the time of the election as an example of why people shouldn’t vote for the Democratic nominee. The editor, who is also a Christian, even implied that, spiritually, there could be more going on than meets the eye (hinting at some kind of demon possession). When I consider the fact that a journalist who, frankly, should know better, was uncritically consuming this media, I wonder where that leaves ordinary people who haven’t been trained to analyse information with a sceptical eye or to verify and corroborate facts.

What’s more, if this was a ShallowFake, then where DeepFakes are concerned, we can all be duped. That’s why the big tech companies are in a kind of arms race to develop technology that detects forgeries and why media companies, such as the BBC and Reuters, are creating and investing in fact-checking teams. But, like the game Whac-A-Mole, fake news will continue to resurface, especially when it serves a dark purpose. What the Clinton footage and the interaction with my journalist colleague showed me was the importance of questioning the images, articles and videos that are shared with us (see box).

We all must ask ourselves: what are the things I fear that others might exploit for political (or other) purposes? What beliefs do I hold that, when confronted, could sway my decision-making? As a Christian, how can I be someone who upholds the truth? For anyone who believes in a spiritual realm and who knows that evil exists, that footage of Clinton was disturbing. Even if we recognised it as a fake it had the potential to sow seeds of doubt. Ultimately that is the problem with fake news. Before long we will stop believing the real news, and journalism – the ‘Fourth Estate’ responsible for holding those in power to account – will have lost much of its power for good.

There have been some exciting advances in the fight against DeepFakes, namely a new Microsoft tool that will help news organisations detect subversion. But in a world where truth is increasingly becoming a rare commodity, we all have a responsibility to critically question the media we consume and seek out news from reputable sources. We’re dealing with an epidemic of fake news that’s becoming harder and harder to spot. Christians must do everything we can to seek after truth – after all, someone once said, it would set us free.